Phantom MK-1 humanoid soldier robots have been delivered to Ukraine so that their effectiveness can be evaluated.

Foundation, the company that makes them, told TIME that two robots were sent to Ukraine in February.

Ukraine has become the world’s main testing ground for weapons manufacturers, including Western startups.

Among them is the American company Foundation, which plans to send Phantom robots to the front line to refine the technology.

Company co-founder Mike LeBlanc says that what he has seen in Ukraine has only strengthened his belief in the value of humanoid soldiers.

“Humanoid soldiers could be invaluable for resupplying and conducting reconnaissance, especially in places drones cannot reach, such as bunkers. With a heat signature similar to a human’s, robots like Phantom could also confuse the enemy. We need something that can interact with all of these things,” LeBlanc says.

He adds that the aim is for the robot to be able to use “any weapon that a human can use.”

Phantom is currently being tested at factories and shipyards from Atlanta to Singapore.

The company already has research contracts totaling $24 million with the U.S. Army, Navy, and Air Force.

Tests are also planned with the U.S. Marine Corps, in which Phantom will be trained to place explosives on doors to help troops breach buildings more safely.

LeBlanc also says the company is in "very close contact" with the U.S. Department of Homeland Security about possibly using Phantom to patrol the country's southern border.

“Humanoid soldiers are a natural extension of existing autonomous systems, such as drones,” he notes.

The company believes that widespread use of humanoid robots could eventually cancel out either side's tactical advantage in a conflict, much as nuclear deterrence does, and potentially reduce the risk of escalation.

Current Pentagon protocols state that automated systems can engage only after receiving authorization from a human. Foundation emphasizes that it plans to follow this principle with the Phantom MK-1 as well.

Meanwhile, AI-powered drones in Ukraine are already capable of identifying and engaging targets on their own.

For LeBlanc, who flew more than 300 combat missions with the U.S. Marine Corps, what he saw during a trip to Ukraine with the Phantom was "truly shocking."

“This is a full-scale war of robots, where the robot is the primary fighter and humans only provide support. It’s the complete opposite of what it was during my service in Afghanistan: back then, people were the main force and technology was just a tool.”

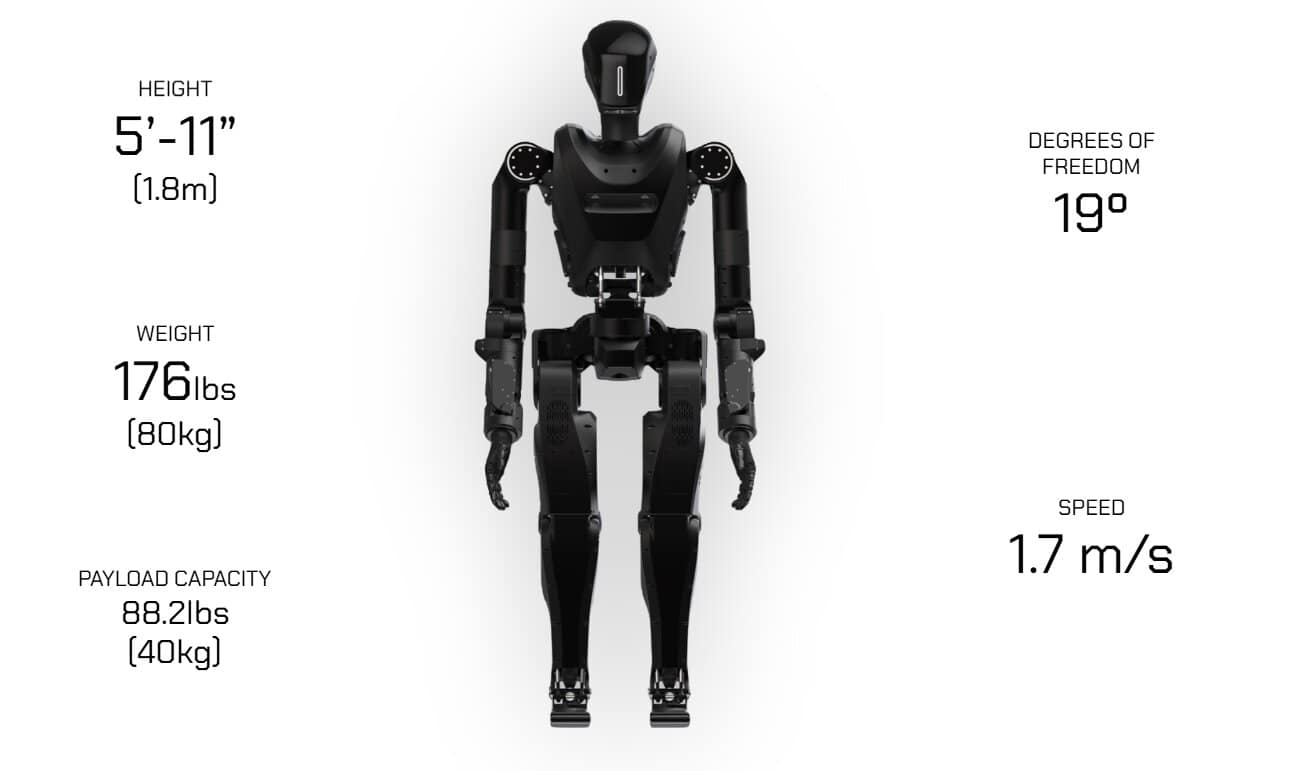

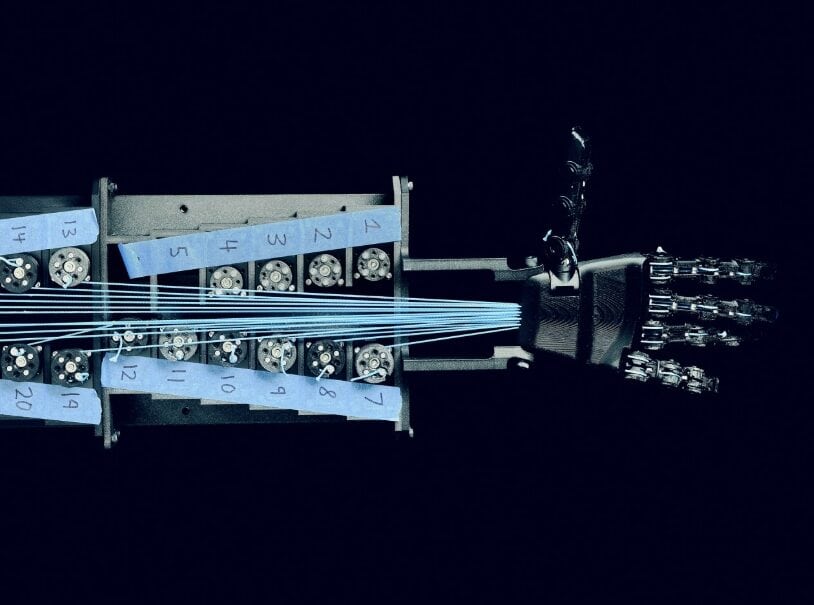

Humanoid robots are heavy and expensive, require regular recharging, can break down, and often lose their balance.

A humanoid’s movement is powered by about 20 motors, and each of them must operate flawlessly.

Deploying humanoids alongside regular troops could also create additional risks.

"If you fall next to a baby, you know how to land without causing harm. Can a humanoid do the same?" says Prahlad Vadakkepat, associate professor at the National University of Singapore and founder of the Federation of International Robot-sports Association (FIRA).

Some risks are already apparent. Captured drones are a significant source of sensitive data, since, like smartphones, they store or transmit detailed intelligence.

Drones can also be compromised by intercepting their radio signals. A hacked humanoid soldier introduces a whole new set of risks: the enemy could potentially take control of a robot fleet through software "backdoors" and turn it against its creators.

“What you’re seeing now is just the first clumsy attempt to show how robots could conduct our wars,” LeBlanc says.

Another major risk is whether a humanoid can accurately assess a situation, given that artificial intelligence is still far from perfect.

AI systems can make mistakes known as ‘hallucinations,’ where generative tools confidently produce false or misleading information that is not based on their training data.

“With these large language models, we cannot fully explain how they make decisions. It’s unacceptable to have lethal autonomous systems that occasionally decide to ‘hallucinate,’” AI experts say.

AI models can also suffer from algorithmic bias or behavioral drift. Over time, as a system ‘learns’ in real-world conditions, its logic may diverge from the original ethical constraints.

Despite recent advances, much work remains. During a visit by TIME journalists, one of the Phantom robots fell with a loud crash several times.

The Phantom MK-2 is expected to debut in April with a range of upgrades: consolidated electronics to reduce the risk of short circuits, waterproofing, larger battery packs, and the ability to carry loads of up to 80 kg.

Last year, French military forces began testing robot dogs alongside real dogs during training exercises.
